Commercial voice cloning for ads: a practical guide for marketers

Decide when to use commercial voice cloning for ads, evaluate vendor quality and rights, set consent and watermarking workflows, and run A/B ROI tests for safe deployments.

Advertisers want faster iterations and consistent brand voices — that’s why commercial voice cloning for ads is moving from experiment to production. This guide gives marketing teams, agencies, and independent creators measurable criteria to evaluate commercial voice-AI platforms, practical workflows to protect brands and rights, and A/B testing strategies to measure ROI when you deploy synthetic voices in paid ads.

Why advertisers are rapidly adopting voice cloning (cost, speed, scale)

Ad teams are under constant pressure to produce more creative variations, run geo- and language-targeted campaigns, and iterate quickly after performance data arrives. Commercial voice cloning for ads addresses three concrete bottlenecks: cost, speed, and scale.

- Cost: Hiring multiple human voice talents, booking studio time, and paying per-session rates becomes expensive when you need dozens of localized or variant reads. Synthetic voices reduce per-variant marginal costs once a clone or license is in place, making it practical to generate many ads and micro-targeted versions without repeated talent fees.

- Speed: Turnaround time drops from days (scheduling, recording, edits) to minutes or hours. That faster loop enables real-time copy testing and rapid reaction to campaign performance signals or market events.

- Scale: Synthetic voices let you produce consistent brand tones across languages and formats — from 6-second social spots to long-form narrated content — without re-casting for every market.

These operational gains are why platforms integrating high-quality cloning are being adopted across marketing teams and studios. But faster and cheaper doesn’t remove responsibility: later sections explain how to evaluate quality, secure rights, and manage risk so those efficiencies don’t create legal or reputational exposure.

How to judge voice-clone quality: MOS, speaker similarity, emotional range, and real-world tests

Quality assessment needs to be objective and tied to ad outcomes. Rely on a small, repeatable battery of measurements rather than subjective impressions alone.

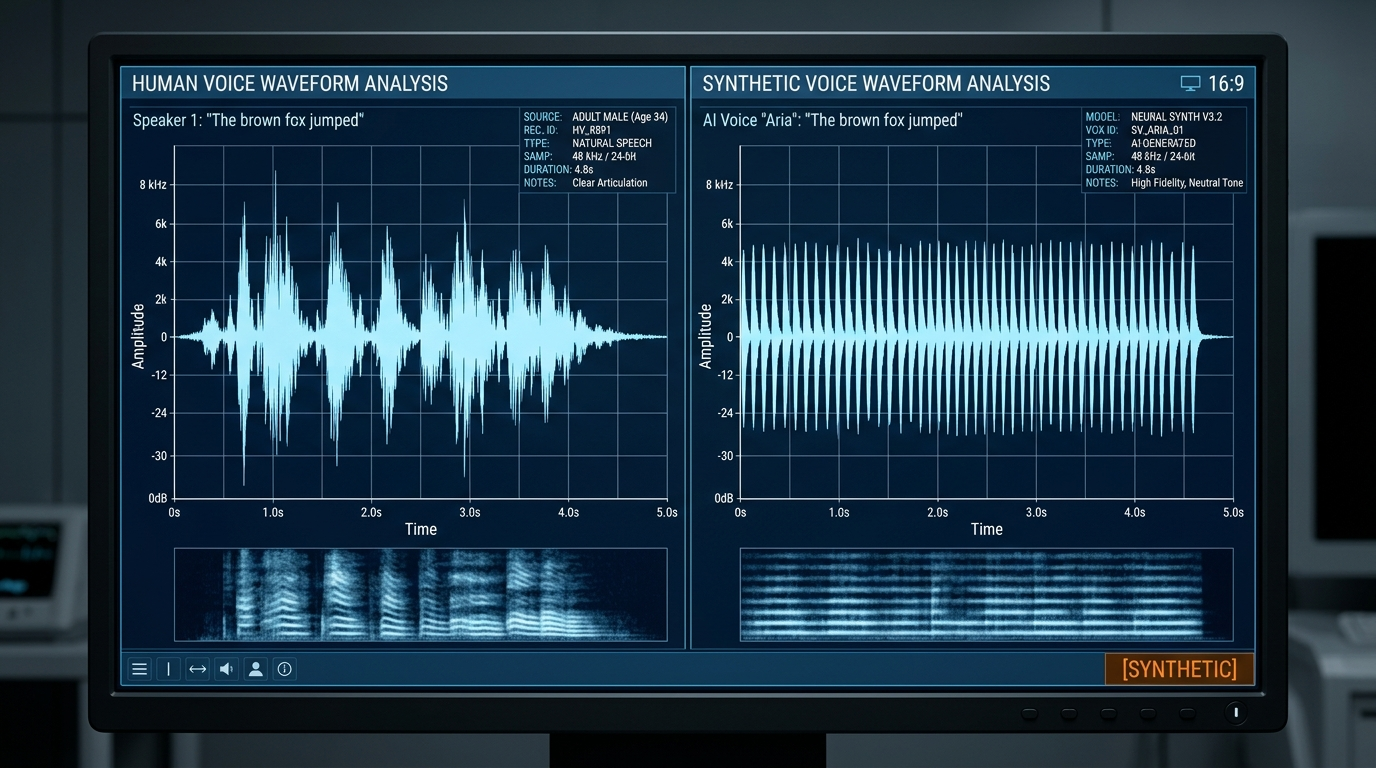

Start with Mean Opinion Score (MOS), the standard measure of perceived naturalness used in academic work and industry benchmarks (see the VoiceMOS Challenge). MOS lets you rank vendor outputs on a 1–5 scale and track improvements across versions. Complement MOS with comparative MOS (CMOS) if you plan side-by-side comparisons between human and synthetic reads.
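As a rough illustration of how panel ratings turn into a comparable number, the sketch below averages 1–5 listener ratings and attaches an approximate 95% interval. The function name, the normal-approximation interval, and the sample ratings are assumptions made for this example, not part of any vendor tool.

```python
import math
import statistics

def mos_with_ci(ratings, z=1.96):
    """Return (mean, half_width) for a list of 1-5 listener ratings.

    Uses a normal approximation for the ~95% interval, which is only
    reasonable once you have a few dozen ratings or more.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("MOS ratings must be between 1 and 5")
    mean = statistics.mean(ratings)
    stderr = statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mean, z * stderr

# Hypothetical ratings for two vendors reading the same script.
vendor_a = [4, 5, 4, 4, 3, 5, 4, 4]
vendor_b = [3, 4, 3, 3, 4, 3, 2, 4]
for name, ratings in (("vendor_a", vendor_a), ("vendor_b", vendor_b)):
    mean, half = mos_with_ci(ratings)
    print(f"{name}: MOS {mean:.2f} +/- {half:.2f}")
```

If the two intervals overlap heavily, treat the vendors as tied on naturalness and collect more ratings before ranking them.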

Speaker similarity is essential when cloning a specific talent: use a similarity score (many vendors provide one) or automated embeddings to quantify how closely the clone matches the target voice. For brand consistency, require a minimum similarity threshold in vendor SLAs.
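One common way to quantify similarity, sketched here under assumptions, is the cosine similarity between speaker embeddings of the reference recording and the clone. How the embeddings are produced is outside this sketch (it depends on the vendor or model you use), and the 0.85 threshold is a hypothetical placeholder that you would calibrate on your own data.

```python
import math

MIN_SIMILARITY = 0.85  # hypothetical SLA threshold; calibrate on your own data

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)

def passes_similarity(target_emb, clone_emb, threshold=MIN_SIMILARITY):
    """True when the clone is at least `threshold` similar to the target."""
    return cosine_similarity(target_emb, clone_emb) >= threshold

# Toy 3-dimensional vectors; real speaker embeddings have hundreds of dimensions.
print(passes_similarity([0.2, 0.7, 0.1], [0.25, 0.65, 0.12]))
```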

Measure intelligibility with word error rate (WER): transcribe the synthetic speech with a speech recognizer and compare the transcript against the script. Low WER matters for message clarity, especially in dense ad copy or on voice-first platforms.
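WER is the word-level edit distance between the script and the transcript, divided by the number of words in the script. The sketch below is a minimal version that assumes you already have the transcript as text; it lowercases and splits on whitespace only, so punctuation handling is left to you.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference script must not be empty")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)

script = "save twenty percent today"
transcript = "save twenty per cent today"  # hypothetical recognizer output
print(word_error_rate(script, transcript))  # 0.5: one substitution, one insertion
```

Because insertions count as errors, WER can exceed 1.0 when the transcript is much longer than the script.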

Emotional range and prosody influence persuasion. Run controlled perceptual tests that request multiple intonations (excited, calm, urgent) and measure MOS per emotion. Recent shared-evaluation work (VoiceMOS Challenge 2024) shows objective predictors can track perceived naturalness across emotional content, so include these automated predictors in vendor evaluations.

Finally, run real-world listening tests with representative audience samples. Academic preprints and reporting show that human listeners often fail to reliably distinguish high-quality voice clones from real human recordings: one Queen Mary study and related vishing research reported near-zero discriminability (mean accuracy of about 37.5%). High-quality clones can therefore pass listener scrutiny, but you should still validate for your brand’s audience and use case with blind ABX or forced-choice tests, which catch subtle failures in context.
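To read the result of a two-alternative forced-choice test, one option is an exact one-sided binomial test against guessing. The sketch below assumes each trial is independent and that chance is 50%; the trial counts in the usage lines are made up.

```python
from math import comb

def p_value_above_chance(correct, trials, chance=0.5):
    """One-sided exact binomial p-value: P(X >= correct) under pure guessing."""
    if not 0 <= correct <= trials:
        raise ValueError("correct must be between 0 and trials")
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )

# Hypothetical panel: 200 trials, 108 correct identifications of the clone.
p = p_value_above_chance(correct=108, trials=200)
print(f"p-value vs. guessing: {p:.3f}")
```

A large p-value means the panel's accuracy is consistent with guessing. It does not prove the clone is indistinguishable, only that this test did not detect a difference.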

Head-to-head: commercial voiceover AI platforms and what matters for ads (rights, pricing, languages, APIs)

Vendor selection should be a checklist combining objective quality and commercial terms. Top platforms widely used by marketers from 2024–2026 include ElevenLabs, Play.ht, Murf.ai, Resemble.ai, Lovo, and OpenAI TTS — independent comparisons highlight meaningful differences in naturalness, language coverage, pricing, and licensing.

Core evaluation dimensions:

- Naturalness and cloning accuracy: ElevenLabs consistently ranks at the top for cloning/naturalness in multiple 2026 comparisons; Play.ht and Murf are strong in production workflows and multi-language support. Run MOS and similarity tests for your scripts.

- Commercial rights and licensing: Confirm the vendor’s commercial-use terms for ads. Keep a written record that the plan you purchase explicitly permits paid advertising and redistribution. Industry reporting stresses this as a common pitfall.

- Pricing model: Compare per-minute, credit-based, or subscription tiers. For predictable volume, subscription or enterprise credits can lower marginal costs; for ad-scale spikes, real-time APIs with predictable pricing are valuable. When you assess cost, include the studio editing time you’d otherwise pay for.

- Language coverage and regional accents: Platforms vary widely — Play.ht and Lovo advertise larger language catalogs while others focus on depth per language. If your campaign targets multiple markets, prefer vendors with tested locale performance.

- API latency and real-time options: If you need dynamic personalization or programmatic creative optimization, prioritize vendors with robust APIs and low-latency streaming.

- Security, watermarking, and provenance: Ask whether the vendor supports audio watermarking or provenance metadata and what audit logs they provide for cloned voices.

To operationalize vendor choice, create a scorecard with MOS, similarity, WER, languages, licensing explicitness, API features, and pricing. For PlayVideo.AI customers, integrate voice assets into creative pipelines (see /ai-voices for cloning and /create-video to embed audio into video assets). Also review pricing models on /pricing so you can estimate production spend as you scale.
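A scorecard like this can be reduced to a weighted sum once each dimension is normalized to a 0–1 scale. The sketch below is one possible layout: the weights, field names, and the sample vendor are hypothetical and should be replaced with your own priorities and measurements.

```python
# Hypothetical weights; they sum to 1.0 so the final score stays in 0-1.
WEIGHTS = {
    "mos": 0.25,
    "similarity": 0.20,
    "intelligibility": 0.10,
    "languages": 0.10,
    "licensing": 0.20,
    "api": 0.05,
    "pricing": 0.10,
}

def vendor_score(metrics, weights=WEIGHTS):
    """Combine normalized vendor metrics into a single 0-1 score."""
    normalized = {
        "mos": (metrics["mos"] - 1.0) / 4.0,                # 1-5 scale to 0-1
        "similarity": metrics["similarity"],                # already 0-1
        "intelligibility": max(0.0, 1.0 - metrics["wer"]),  # lower WER is better
        "languages": metrics["locales_covered"],            # share of target locales
        "licensing": 1.0 if metrics["ads_license_confirmed"] else 0.0,
        "api": metrics["api_fit"],                          # team-assigned, 0-1
        "pricing": metrics["pricing_fit"],                  # team-assigned, 0-1
    }
    return sum(weights[name] * normalized[name] for name in weights)

vendor_x = {  # made-up measurements for an unnamed vendor
    "mos": 4.2, "similarity": 0.91, "wer": 0.04, "locales_covered": 0.8,
    "ads_license_confirmed": True, "api_fit": 0.7, "pricing_fit": 0.6,
}
print(f"vendor_x score: {vendor_score(vendor_x):.2f}")
```

Licensing is treated as pass/fail here; in practice you may prefer to disqualify any vendor whose plan does not explicitly cover paid advertising rather than let other scores compensate.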

When to choose synthetic voice vs human voice in an ad (brand fit, regulation, trust)

The decision to use synthetic voice in an ad is not purely technical — it’s strategic. Use synthetic voice when it increases value without harming trust.

Choose synthetic voice when:

- You need many variants quickly (personalization, localization, multivariate testing).

- The brand voice must be consistent across formats or markets and a high-quality clone exists (confirmed by similarity and MOS thresholds).

- You require on-demand edits or last-minute copy swaps that would be costly with human talent.

Prefer human performers when:

- The brand relies on a recognizable human spokesperson whose persona is part of equity and must be authentic.

- Regulatory or platform rules require disclosure or restrict synthesized likenesses (some markets or publishers may demand explicit notice or restrict cloned celebrity voices).

- Trust and authenticity are core to the message (testimonials, sensitive topics, or spokespeople with strong audience relationships).

A hybrid approach often works: record a human for signature reads and create a vetted synthetic master for variations and localization. This keeps the primary endorsement authentic while capturing the speed benefits of cloning.

Finally, consider ad platform policies and local regulations. Some publishers or regions may require disclosure or limit use of cloned voices; plan to include provenance markers or short disclosures in the ad’s technical metadata or in the creative itself, as required.

Legal, consent and safety best practices for ad use (audio consent, watermarking, disclosure)

Risk control must be baked into procurement and production. Follow three legal and safety pillars: consent, watermarking/provenance, and clear licensing records.

Consent: Obtain explicit, documented consent from any speaker whose voice you clone. The consent record should specify the permitted use cases (including advertising), the geographic scope, and the duration. At a minimum, archive signed release forms or recorded verbal consent, with metadata, in your project management system. Industry reporting highlights scams and misuse risks; explicit consent reduces legal and reputational exposure (Axios reporting).

Watermarking and provenance: Use audio watermarking and provenance tags where supported. Watermarks let downstream platforms and auditors detect synthetic audio and are a recommended mitigation. Ask vendors about robust watermark options and whether they provide signed metadata or audit logs that prove creation time and account identity.

Licensing and recordkeeping: Keep copies of the vendor terms and proof that your plan covers commercial advertising. Keep invoices, license keys, and email confirmations in a legal folder tied to the campaign. This minimizes disputes and helps with platform compliance checks.

Disclosure: Where required by platform rules or local law, include a brief disclosure in the ad creative or in ad metadata. Even when not required, consider a subtle disclosure strategy for sensitive categories to preserve trust. Check each publisher’s policies before launch.

Platform and safety checks: Run a pre-release safety checklist: confirm consent, confirm the license, apply watermarking, and verify that the clone’s MOS and similarity scores meet your thresholds. These steps reduce the chance of misuse and align with recommendations from consumer-safety reporting.
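The checklist can be encoded as a simple release gate so nothing ships with an open item. This is a sketch under assumptions: the field names and the default thresholds (MOS 4.0, similarity 0.85) are hypothetical and should come from your own policy.

```python
from dataclasses import dataclass

@dataclass
class ReleaseCandidate:
    consent_on_file: bool
    license_covers_ads: bool
    watermark_applied: bool
    mos: float
    similarity: float

def release_blockers(candidate, min_mos=4.0, min_similarity=0.85):
    """Return a list of open checklist items; an empty list means clear to ship."""
    blockers = []
    if not candidate.consent_on_file:
        blockers.append("missing documented consent")
    if not candidate.license_covers_ads:
        blockers.append("license does not cover paid advertising")
    if not candidate.watermark_applied:
        blockers.append("watermark not applied")
    if candidate.mos < min_mos:
        blockers.append(f"MOS {candidate.mos} below {min_mos}")
    if candidate.similarity < min_similarity:
        blockers.append(f"similarity {candidate.similarity} below {min_similarity}")
    return blockers

spot = ReleaseCandidate(True, True, False, mos=4.3, similarity=0.9)
print(release_blockers(spot))  # ['watermark not applied']
```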

Production workflow: cloning, fine-tuning, SSML, watermarking and delivery for ad platforms

Turn evaluation and policy into a repeatable production flow. A practical pipeline reduces mistakes and shortens delivery time.

1) Intake and consent

- Capture signed consent and baseline recordings for voice cloning. Store releases and reference audio in the project file so cloning is defensible.

2) Clone and baseline testing

- Submit reference audio to the vendor and request similarity and MOS baseline clips. Validate them with automated MOS predictors and blind listening panels.

3) Fine-tuning and SSML

- Use vendor tools and SSML to control prosody, pauses, and emphasis. For complex scripts, iterate: produce a draft, run a perceptual pass, and patch problem lines with phonetic hints or SSML tags. SSML helps match pacing requirements for 15–30 second ad spots.

4) Watermarking and provenance

- Apply vendor-supported watermarking and export provenance metadata. Embed identifiers in asset names and delivery manifests so your ad ops team can trace origin.

5) Mixing and music

- Generate or license background music (see /create-music for AI-assisted scoring) and apply mastering. Use separate stems for voice and music for easy updates and compliance with platform loudness specs.

6) Video integration and effects

- Combine voice tracks with visuals using /create-video and add visual assets from /create-image. If you rely on lipsync or avatar effects, test timing with voice masters and use /effects for pre-built sync templates.

7) Export and delivery

- Export stems with watermark intact, attach provenance metadata, and include licensing documentation. For programmatic ad platforms, include metadata fields and an audit trail. Maintain a staging release for A/B testing before full rollout.

This workflow balances speed and safety, letting you produce many variants while keeping control over quality and compliance.
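For the SSML step, a small helper can keep markup well-formed across many variants. The sketch below uses standard SSML elements (`speak`, `prosody`, `break`, `emphasis`), but the function name, the three-part script structure, and the default values are assumptions, and vendors differ in which tags and attributes they honor, so check your vendor's documentation.

```python
import xml.etree.ElementTree as ET

def build_ad_ssml(hook, offer, call_to_action, rate="medium", pause_ms=300):
    """Build a short SSML document: hook, pause, offer, emphasized call to action."""
    speak = ET.Element("speak")
    prosody = ET.SubElement(speak, "prosody", rate=rate)
    prosody.text = hook + " "
    pause = ET.SubElement(prosody, "break", time=f"{pause_ms}ms")
    pause.tail = " " + offer + " "  # text that follows the pause
    emphasis = ET.SubElement(prosody, "emphasis", level="strong")
    emphasis.text = call_to_action
    # ElementTree escapes characters such as "&" in the copy automatically.
    return ET.tostring(speak, encoding="unicode")

print(build_ad_ssml("Fresh & fast.", "Save 20% this week.", "Order today."))
```

Generating markup from a tree rather than string concatenation avoids broken tags when copywriters include characters like `&` or `<` in scripts.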

How to test performance and measure ROI: A/B design, brand impact metrics, and fraud risk monitoring

Measuring synthetic voice performance must tie to business metrics and fraud controls. Design experiments that answer both creative and safety questions.

A/B and multivariate tests

- Use click-through and conversion metrics for live A/B tests, and CMOS for pre-launch listening comparisons. Size each test with enough statistical power to detect small but meaningful lifts (e.g., 5–10% CTR changes). When comparing synthetic vs human reads, run blind or masked experiments so audiences don’t change behavior because they know they’re hearing synthetic audio.
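To see what detecting a small lift demands in practice, the sketch below estimates the impressions needed per variant using the standard two-proportion sample-size formula. It assumes the lift is relative to the baseline CTR, a two-sided 5% significance level, and 80% power; the 2% baseline is a made-up figure.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate impressions per variant for a two-sided two-proportion test."""
    if relative_lift == 0:
        raise ValueError("relative_lift must be non-zero")
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1):
        raise ValueError("rates must be strictly between 0 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)

# Hypothetical 2% baseline CTR, looking for 5% and 10% relative lifts.
for lift in (0.05, 0.10):
    print(f"{lift:.0%} lift: {sample_size_per_arm(0.02, lift):,} impressions per arm")
```

Required volume grows roughly with the inverse square of the lift, so halving the detectable lift needs about four times the impressions. Budget test traffic accordingly.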

Brand impact metrics

- Run brand lift studies that track ad recall, message comprehension (intelligibility), and trust. Use short surveys post-exposure to measure perceived authenticity and persuasion. These perceptual metrics complement MOS and similarity scores from production tests.

Attribution and conversion

- Measure downstream metrics (landing-page conversions, sign-ups, purchases) and include voice variant as a dimension in your analytics. For programmatic optimizations, prioritize variants that improve CPA while respecting compliance requirements.

Fraud and impersonation monitoring

- Monitor for misuse or impersonation complaints after launch. Maintain logs showing consent, license, and watermark proof. Use watermark detection in third-party monitoring to detect unauthorized reuse.

Cost-to-benefit tracking

- Compare spend on synthetic production (vendor fees, credits, editing time) against human recording costs and the revenue lift from improved personalization or faster iteration. Internal dashboards should show per-variant production cost, engagement lift, and ROI per campaign.
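The comparison comes down to two small calculations: cost per variant under each production model, and ROI on the incremental revenue. The sketch below uses hypothetical figures throughout; the function names and cost categories are assumptions for illustration.

```python
def cost_per_variant(fixed_cost, per_variant_cost, variants):
    """Average production cost per ad variant, spreading fixed costs evenly."""
    if variants <= 0:
        raise ValueError("variants must be positive")
    return (fixed_cost + per_variant_cost * variants) / variants

def campaign_roi(incremental_revenue, production_cost, media_cost=0.0):
    """ROI as (incremental revenue - total cost) / total cost."""
    total_cost = production_cost + media_cost
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (incremental_revenue - total_cost) / total_cost

# Made-up numbers: a clone with a one-time setup fee vs. per-session human reads.
synthetic = cost_per_variant(fixed_cost=1000, per_variant_cost=10, variants=50)
human = cost_per_variant(fixed_cost=0, per_variant_cost=250, variants=50)
print(f"synthetic: {synthetic:.2f} per variant, human: {human:.2f} per variant")
print(f"ROI: {campaign_roi(incremental_revenue=1500, production_cost=500, media_cost=500):.0%}")
```

Synthetic production only wins on cost once the variant count is high enough to amortize the setup fee, so rerun the comparison with your actual volumes.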

A final practical tip: keep a performance ledger per cloned voice (MOS, similarity, language, production cost, campaign ROI). That ledger helps you decide which voices to retain, which to retire, and when to invest in improved cloning or human re-record.

Frequently Asked Questions

Do I always need signed consent before cloning a voice for ads?

Yes. Obtain explicit, documented consent that covers commercial use and the geographic scope. Consent reduces legal and reputational risk and aligns with industry guidance on misuse prevention.

Can platforms reliably watermark synthetic audio?

Many vendors offer watermarking or provenance metadata; however, the robustness varies. Require watermarking and audit logs in vendor SLAs when using cloned voices commercially.

Will audiences notice high-quality voice clones?

At high quality levels, listeners often fail to distinguish clones from human recordings; studies report near-zero discriminability in some contexts. Still, validate with your target audience using blind tests to ensure brand-appropriate results.

Conclusion

Action checklist to deploy commercial voice cloning for ads safely:

- Score vendors: collect MOS, similarity, WER, language coverage, API features, and licensing clarity, and use the results to rank vendors before procurement.

- Lock consent and licensing: get signed releases and store invoices and license terms in a campaign legal folder.

- Bake quality into production: follow the cloning→fine-tune→SSML→watermark→mix workflow and keep stems separate for edits.

- Test in-market: run blind A/B tests and brand lift surveys, track conversions and CPA, and maintain a ledger of voice performance and costs.

- Monitor and respond: use watermark detection and logs to address misuse quickly.

If you’re ready to prototype: test a single high-priority spot using a cloned voice alongside a human read, run MOS/similarity checks, and run a short A/B test. For rapid integration, use /ai-voices to generate voice assets, layer music from /create-music, and assemble final creatives in /create-video. Review pricing options on /pricing before scaling. These steps let your team capture the speed and scale of commercial voice cloning for ads while managing legal and trust risks.